How to Hire Engineers

Most engineering hiring is broken. Not just suboptimal: broken in ways that actively filter out the people who are best suited to do the work. After hiring dozens of engineers across seven companies, and making more than a few painful mistakes, I've started to see the patterns.

This is a practical guide to what actually predicts success, why the standard process fails, and how to design a hiring loop that consistently finds people who can do the job.

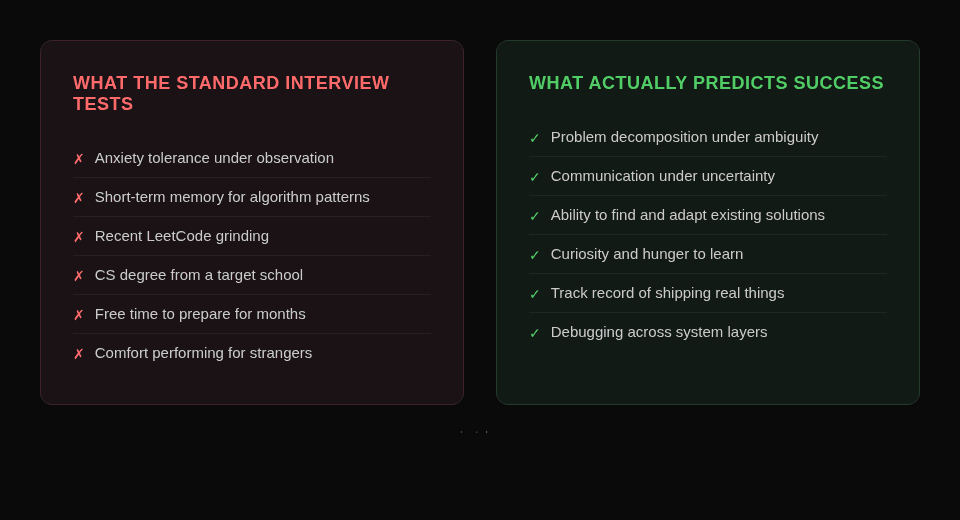

The theater we've convinced ourselves is science

You probably know this process:

- Recruiter screen to confirm you're a real human.

- Technical screen: LeetCode-style problems under time pressure, often in a shared editor while someone watches.

- Onsite: four to six interviews — more algorithms, maybe a system design question, a behavioral round.

- Debrief.

- Decision.

This spread because Google and Facebook used versions of it in the 2000s. It propagated through engineering culture as a meme, not as a validated, evidence-based practice.

The underlying logic was never "this predicts job performance." It was "smart people do well at hard problems, so we'll give candidates hard problems."

The research is damning:

- Google's own head of People Operations, Laszlo Bock, studied tens of thousands of interviews internally and found "zero relationship" between interview scores and job performance. His words: "It was a complete random mess." Google — the company that popularized this style of interview — proved it doesn't work, and the industry kept doing it anyway.

- NC State and Microsoft researchers found that candidates in private coding interviews performed twice as well as those being observed on a whiteboard. The kicker: all women in the public interview condition failed. All women in the private condition passed. The interview wasn't measuring ability — it was measuring anxiety tolerance under performative stress.

- interviewing.io analyzed thousands of real interviews and found that over one-third of high-scoring candidates failed at least one interview in the same process. Their CEO, Aline Lerner, put it plainly: "Technical interviewing is a process whose results are nondeterministic and often arbitrary." Completing 5+ mock interviews roughly doubles your pass rate regardless of underlying ability — meaning the interview is testing preparation, not skill.

What you've actually built is a filter for people who already play the game — not for people who can do the job.

And the game self-perpetuates. The creator of Homebrew, a tool used by the majority of Google's engineers, was by his own account rejected by Google for not inverting a binary tree on a whiteboard. When he tweeted about it, the replies split exactly the way you'd expect. One camp: "Hiring isn't broken, dude. You just don't interview well." Another: "It weeds out the fakers who can't do it given any amount of time." A Microsoft Research study analyzing 46,000 developer comments found the same pattern: engineers who passed the gauntlet defend it; everyone else calls it theater. It's hazing logic dressed up as meritocracy. The people who benefit from the system defend the system, and the filter tightens.

Here's who wins in that system: engineers with the free time to spend a month doing zero productive work while memorizing problem patterns they'll almost never encounter in production. Not the engineer supporting a family. Not the career changer working a day job while learning to code at night. Not the senior engineer who's been shipping real systems for a decade and hasn't touched a binary tree since college. The people who get the best jobs under this model are the ones who can afford to play the game — and that's a selection for privilege, not for ability.

Now, I want to be fair. There are real things that come from doing these problems. Recursion is fundamental. The problem-solving instincts you build from working through two-pointer and sliding-window problems are genuinely useful. Understanding time complexity matters. But all of that is best learned in a classroom, internalized once, and then applied as needed in the real world. I wrote an A* pathfinding algorithm for a dungeon builder over a weekend in college. Haven't used it since. There have been times I've needed recursion in production, but it was never the same problem I'd solved on a whiteboard. It was always shaped by the specific domain, the specific data, the specific constraints. The real skill isn't knowing the pattern. It's recognizing when a pattern applies to a new problem and adapting it. And no LeetCode grind teaches that. Experience does.

What actually predicts whether someone will be good

Over time, and with enough feedback loops, a different set of predictors emerges.

Problem decomposition

Can this person take an ambiguous problem and break it into pieces they can reason about?

This is not about knowing the answer. It's about:

- Identifying what they know and what they don't.

- Surfacing assumptions explicitly.

- Defining sub-problems that make the bigger problem tractable.

In real engineering environments, problems are always underspecified. Someone who freezes when the problem is fuzzy will struggle.

Communication under uncertainty

Can they think out loud in a way that's useful?

You're looking for:

- "I'm not sure, but my intuition is X because of Y" instead of silence or fake confidence.

- The ability to narrate trade-offs, not just produce answers.

Real engineering is collaborative. You're not hiring someone to code alone in a room. You're hiring someone who will sit in planning meetings, debug with teammates, write design docs, and explain trade-offs to non-engineers. Communication is not a soft skill; it's the skill.

The ability to find an answer

This is different from curiosity, and it's different from knowledge. It's a practical skill: given a problem you don't immediately know how to solve, can you efficiently find a path to the answer?

That might be an npm package that does exactly what you need. It might be a blog post from someone who solved this problem two years ago and wrote up what they learned. It might be reading the source code of a library instead of guessing at the docs. It might be a Stack Overflow thread where the second answer is better than the accepted one and saves you a week.

The best engineers I've worked with timebox this instinctively. They don't disappear for three days "researching." They spend 30 minutes pulling threads, find the prior art, and come back with something concrete: "Someone at Shopify hit this exact problem in 2023, here's what they did, here's what I'd change for our case." Or they find an old implementation and realize the world moved on — "This was 30 interfaces, an implementation layer, and a factory pattern that was overkill even at the time. We can do this in 10 lines now."

The anti-pattern is the engineer who either (a) tries to solve everything from first principles like nobody has ever written software before, or (b) gives up and immediately asks someone else without spending 20 minutes looking. You want the one in the middle — the one who checks whether the problem is already solved, learns from what others tried, and adapts it rather than reinventing it.

In an interview, this is easy to test. Give them a problem they won't know off the top of their head and tell them they can use whatever resources they'd normally use. Watch what they search for, what they skim versus what they read carefully, and how quickly they synthesize what they find into a direction. That 15-minute window tells you more about their daily productivity than any algorithm question ever will. In 2026, that might look like the candidate prompting Claude or Gemini to pull a list of approaches with trade-offs while they simultaneously skim a few articles and docs on their own. The AI results come back, they cross-reference against what they found manually, and then they explain their gut — which approach they'd pick, why, and where they'd dig deeper before committing. That's not cheating. That's how the best engineers actually work now, and if your interview process doesn't allow it, you're testing for a version of the job that doesn't exist anymore.

Curiosity and the hunger to learn

If I had to pick one trait that predicts long-term engineering success above all others, it's this: the drive to understand how things actually work and the hunger to keep learning after the job description stops requiring it.

The fastest-growing engineers are the ones who, when they hit a bug they don't understand, go deeper instead of just finding a workaround. They want to know why. They teach themselves new tools on weekends not because someone told them to but because they couldn't stop thinking about a better way to solve the problem from last Tuesday. They read source code for libraries they use because the docs weren't enough.

You can teach someone a framework. You can't teach someone to care about getting better.

This drive is hard to teach and easy to spot:

- Ask them to walk you through something they built.

- Listen for where they light up.

- The ones who get animated about the interesting problem in the middle are usually the ones you want. It might be the tricky race condition, the data modeling trade-off, the UX constraint, or the performance bottleneck they couldn't let go of. It might be the fuzzy matching system where they layered multiple algorithms with their own weighting because no single one was good enough. Or it might be the test suite that baselined performance on every staging push, so they could see whether a change affected execution time before it ever hit production.

Track record of shipping

Not a track record of working at prestigious companies. A track record of actually delivering things that worked.

These are different:

- I've met senior engineers from name-brand companies who'd never shipped a feature end-to-end without three other people carrying them.

- I've hired bootcamp grads who built and deployed three projects in six months, owned their own DevOps, and iterated on user feedback.

One of those is a much better predictor of what happens on your codebase next quarter.

I've worked with teams that built tools that never saw the light of day — or worse, got hired for projects that got canned halfway through, repeatedly. And then I've worked with people who shipped everything they pushed on. It might not have been as polished as they wanted, or it had delays, cuts, and necessary concessions along the way — but it shipped.

A caveat here: shipping isn't always in the engineer's control. Your engineer often isn't the one gatekeeping whether the product goes live. Organizational dysfunction kills projects regardless of how good the code is. So don't penalize someone for a canceled project — dig into what they built and why it didn't land.

But in 2026, an engineer should be able to ship something on their own. Get a side project onto Vercel, GitHub Pages, an app store — somewhere you can see it running. It doesn't have to be impressive. It has to exist. If they've been deep inside big corp, you probably won't see much on their GitHub — NDAs and proprietary codebases make that hard. That's what take-home projects and code samples are for. And I'll be honest: there were years where I didn't have much to show outside of work either, because the job was eating all the hours. But the barrier to shipping something small has dropped so dramatically — a weekend and a Cursor subscription can get a working app deployed — that "I don't have anything to show" is a harder excuse to accept than it used to be.

The diamonds-in-the-rough philosophy

Some of the best engineers I've hired were people other companies had already passed on, or would never have considered.

Examples:

- Bootcamp graduates who came in with fire.

- Career changers who brought domain expertise that made their technical work dramatically more valuable — the nurse who became a health-tech engineer, the accountant who became a fintech developer. I know theology PhDs who became high-tier architects. One of the best engineers I worked with was a history major headed for law school who detoured into pre-med, got sidetracked by a CS course, and became self-taught while still chasing that second degree. Before long he was consulting and building software full-time. The non-traditional path didn't make him worse — the breadth made him better.

- Self-taught engineers who built real things because they wanted to, not because a curriculum told them to.

Traditional hiring filters route these people to the reject pile before anyone looks at their work. That's a mistake. And honestly — for years I had more success hiring from accelerated programs and self-taught backgrounds than from traditional four-year CS programs. The university hires had a 50% attrition rate quarter over quarter. The ones who taught themselves and clawed their way in tended to stick.

Entry-level hiring is brutal right now, and it's going to be hard for these people to get their foot in the door. But I'd personally invest in entry-level engineers who are learning and growing with the tools, AI tools included, over someone with a credential and no curiosity. The short-sighted approach is to only hire seniors because juniors "cost too much to train." The long-term approach is to realize that every senior you want to hire in three years is a junior someone invested in today. (I have a whole post coming on how short-term thinking is eroding engineering quality across the industry, but that's a different rant.)

The shift you need to make is simple but nontrivial:

Evaluate the work, not the path that produced it.

Real evidence: GitHub profiles. Side projects. Deployed applications. Open-source contributions.

Weak evidence: A CS degree from a target school ("good at school fifteen years ago").

Pedigree isn't irrelevant, but it's massively overweighted. The people who pay the price for that overweighting are often those who didn't have access to traditional paths:

- First-generation college students.

- Career changers.

- People from underrepresented groups who faced friction entering the credentialed pipeline.

Hiring from this pool is not charity. It's a competitive advantage, because you're finding talent the standard filter discards.

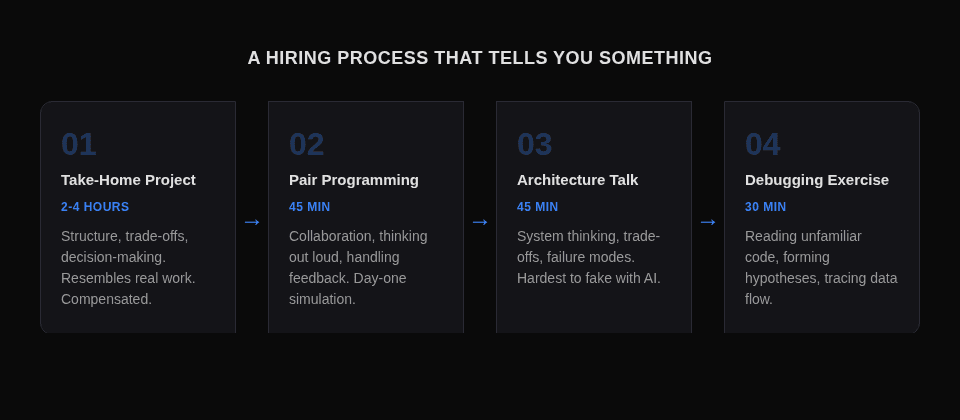

How to structure interviews that tell you something

Here's the process I've landed on after years of iteration.

1. Take-home project

Not a LeetCode problem. A small, realistic project scoped to 2-4 hours of work.

Characteristics:

- Resembles actual work you do: a small API, a data processing task, a UI component with real requirements.

- Open-book: real engineers use documentation.

- Compensated if at all possible — or at least offer a stipend. Their time has value.

How to evaluate:

- Review before the next interview.

- Look at structure, not just correctness: Does the code communicate clearly? Did they make reasonable trade-offs? Did they handle edge cases? Did they include a README explaining decisions?

The submission tells you a lot. The conversation about the submission tells you more.

2. Pair programming session

The goal is not to see if they can solve a hard problem under pressure. It's to see how they think collaboratively.

Give them a problem related to their take-home submission — an extension, a refactor, a bug you've seeded. Work on it together. You're not evaluating whether they solve it. You're evaluating:

- Do they ask clarifying questions before diving in?

- Do they narrate their thinking or code in silence?

- When they get stuck, do they try something new or lock up?

- Can they take a suggestion and run with it, or do they get defensive?

The pair session simulates day-one on the job better than any whiteboard exercise. If they pair well with you for 45 minutes, they'll pair well with your team for 45 weeks.

3. Architecture conversation

Skip this for junior hires. For mid-to-senior engineers, give them a real system design problem — ideally one your company actually faces.

Not "design Twitter" — that's another standardized performance piece. Instead: "Here's a problem we're dealing with. We have X users, Y data model, Z constraint. How would you approach it?"

Listen for:

- Do they ask about the constraints before drawing boxes?

- Do they consider failure modes?

- Can they reason about trade-offs between consistency, availability, and complexity?

- Do they acknowledge what they don't know?

The best answer to a system design question often starts with "it depends" — followed by three smart questions about what it depends on.

This matters more now than it ever has. In the age of AI-assisted development, any engineer with a Copilot subscription can produce syntactically correct code. What AI can't do is architect. It can't make the system-level trade-offs between consistency and availability. It can't reason about what happens when the thing you built last month interacts with the thing someone else is building next month. It can't decide which problems are worth solving and which are distractions. System design is the interview stage that's hardest to fake and most predictive of what someone will actually contribute to your codebase.

And here's the irony: AI is basically your LeetCode kid. It loves to solve the problem from scratch. It doesn't check whether Redis already handles your caching layer, or whether there's an npm package that does the reward system you're about to hand-roll. It produces a solution — often a verbose, over-engineered one — and moves on. It needs guidance. It needs an architect. The engineer who knows when to use the tool that already exists, when to let AI generate the boilerplate, and when to step in and make the decision AI can't — that's who you're hiring for now.

(If you've read my posts on orchestrating 35 AI agents — this is exactly the problem I hit. The agents will happily roll their own solution every time unless you build hard rules that force the question: "Does something already exist for this?" When it does, the task gets kicked to a human queue and I make the call before it moves on. The same instinct you want in an engineer — check before you build — is the same instinct you have to enforce in AI. The difference is a good engineer does it naturally.)
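As a rough sketch of what a "check before you build" rule could look like: this is illustrative Python, not the real orchestration code, and every name in it (`Task`, `Orchestrator`, `route`, the two queues) is hypothetical. It assumes the agent records whatever existing tools it found before the task is routed.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Task:
    description: str
    # Hypothetical field: existing tools or packages found during research.
    existing_candidates: list[str] = field(default_factory=list)
    # Hypothetical flag: has anyone asked "does something already exist?"
    prior_art_checked: bool = False


@dataclass
class Orchestrator:
    human_queue: deque = field(default_factory=deque)
    build_queue: deque = field(default_factory=deque)

    def route(self, task: Task) -> str:
        """Decide where a task goes before any code is written."""
        if not task.prior_art_checked:
            # Hard rule: nothing gets built until the question is answered.
            return "research"
        if task.existing_candidates:
            # Something already exists, so a human makes the call.
            self.human_queue.append(task)
            return "human_review"
        # Nothing found: the agent is cleared to build.
        self.build_queue.append(task)
        return "build"
```

With this sketch, a task like `Task("caching layer", ["Redis"], prior_art_checked=True)` routes to `"human_review"`, while a task that has not been researched yet is bounced back to `"research"` no matter what it is.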

4. Debugging — the most underrated interview signal

Almost nobody includes a debugging exercise in their interview loop, and it's one of the most informative things you can do.

Give the candidate a small codebase with a real bug in it. Not a trick — a genuine bug that requires reading code, forming a hypothesis, and testing it. Watch how they approach it:

- Do they read the code first or start changing things immediately?

- Do they form a theory before they start debugging, or do they trial-and-error?

- Can they trace data flow through a system they didn't write?

- When their first hypothesis is wrong, do they adjust or get stuck?

This simulates what engineers actually spend 40% of their working hours doing. If you can't debug, you can't ship, because nothing works the first time, and the person who can find and fix the problem is worth more than the person who wrote the original code. AI can help debug a good many problems, but set it loose on an entire codebase with multiple layers and services, and it'll burn cycles iterating on one small piece of code when the real answer was in the server logs three levels up. I've watched my agent swarms spin for 20 minutes on a fix that took me 30 seconds once I pulled the actual error from the deployment logs. The engineer who knows how to debug across layers (pull logs from the API, the database, the CDN, the build pipeline) and triangulate where the real problem lives is the one who keeps everything moving, including the AI tools.
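A seeded bug doesn't need to be exotic. Here is one hypothetical example in Python, offered as a sketch rather than a recommended exercise: the function names and the cart scenario are made up for illustration. The bug is a shared mutable default argument, which works well because the symptom (a wrong checkout total) shows up far from the cause.

```python
def add_to_cart(item, cart=[]):
    """Add an item to a cart and return the cart.

    Seeded bug (interviewer's copy only): the default list is created once,
    when the function is defined, so every call that omits `cart` shares it.
    """
    cart.append(item)
    return cart


def add_to_cart_fixed(item, cart=None):
    """Reference fix: create a fresh list for each call that omits `cart`."""
    if cart is None:
        cart = []
    cart.append(item)
    return cart


def checkout_total(cart, prices):
    """Sum the price of every item in the cart."""
    return sum(prices[item] for item in cart)
```

The candidate would only see the buggy version and a report along the lines of "the second customer was charged for the first customer's items." Someone who reads `checkout_total` first, rules it out, and then traces where the cart came from is showing exactly the hypothesis-driven approach the questions above are listening for.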

Moving fast on top candidates

Top engineers interview with 3-5 companies simultaneously. In my experience, the average time from first interview to accepted offer for a strong mid-level engineer is about 2-3 weeks. If your process takes 6 weeks, you're not in the running. You're providing free interview practice.

What moving fast actually looks like:

- Offer within 3-5 days of the final interview. Not 2 weeks of internal deliberation. If you need 2 weeks to decide, you either don't have a clear rubric or you have too many decision-makers.

- First-pass scoring immediately after each round. Rate candidates A/B/C/decline the same day. Flag A-candidates for an expedited path.

- CEO and CTO agreement that top candidates can skip rounds. If I've done a deep technical interview and I'm confident, the candidate shouldn't have to do three more rounds to satisfy a process that was designed for the average case.
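Same-day scoring is easier to enforce when the rubric is written down as data rather than left to memory. A minimal sketch in Python, assuming a simple rule that a candidate is expedited when every round recorded so far is an A; the `Grade` and `Candidate` names and that rule are assumptions for illustration, not a prescribed system.

```python
from dataclasses import dataclass, field
from enum import Enum


class Grade(Enum):
    A = "A"
    B = "B"
    C = "C"
    DECLINE = "decline"


@dataclass
class Candidate:
    name: str
    # Maps a round name (e.g. "pairing") to the grade given that same day.
    grades: dict[str, Grade] = field(default_factory=dict)

    def record(self, round_name: str, grade: Grade) -> None:
        """Store the first-pass grade for one interview round."""
        self.grades[round_name] = grade

    def is_expedited(self) -> bool:
        """Assumed rule: at least one round recorded, and all of them are A."""
        return bool(self.grades) and all(
            g is Grade.A for g in self.grades.values()
        )

    def is_declined(self) -> bool:
        """Any single decline ends the process."""
        return any(g is Grade.DECLINE for g in self.grades.values())
```

The point of the design is that the expedite decision falls out of grades recorded on the day, so nobody has to reconstruct their impressions in a debrief a week later.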

Speed is not just logistics. It's a signal. A fast, decisive process tells the candidate: this company knows what it wants and doesn't waste time. A slow process tells them: this company has internal dysfunction that will show up in every sprint planning meeting for the next two years.

The offer itself matters less than founders think. Yes, compensation needs to be competitive. But the top 10% of engineers are choosing between multiple competitive offers — they're deciding based on:

- The quality of the technical conversation they had with you.

- Whether they believe they'll learn something.

- Whether the mission is real or marketing.

- Whether the team seems like people who ship or people who meet.

If you mumble "we're building cool tech" and can't articulate why this year matters for the company, you'll lose them to the competitor who can.

Startup vs enterprise: different games

These are genuinely different hiring playbooks. Applying the enterprise playbook to a startup is slow death. Applying the startup playbook to an enterprise is chaos.

Startup hiring:

- Equity is part of the comp: 0.5%-3% for early engineers, explicitly tied to milestones. If you can't explain the equity story in two sentences, you'll lose every candidate who's done the math.

- Speed over perfection: a 50% hire who grows into 80% is better than a 90% hire who takes 4 months to find. Slow hiring kills momentum.

- Founding engineers (first 3-5) set the culture permanently. Hire for learning velocity and shipping discipline, not for years of experience.

- Culture is the founder's shadow. Every hire in the first 20 either amplifies or dilutes the DNA. Be deliberate about which one.

Note: Your pizza parties are not culture. Rebranding every weekend crunch as a "hackathon" is not culture. Culture is how you actually work — whether you press for good engineering while allowing the breathing room for it to happen. Grind without recovery isn't intensity, it's attrition, and your best engineers will leave for somewhere that understands the difference. I have a lot more to say about this — that's its own post, coming soon.

Enterprise hiring:

- Before you post a req, ask whether you truly need permanent headcount right now — or whether you're reacting to a spike. We've seen this play out repeatedly, and the COVID cycle was the most expensive lesson the industry has ever gotten. Amazon roughly doubled its corporate workforce between Q4 2019 and Q4 2021. Then cut 18,000 roles in January 2023. Google cut 12,000. Meta cut 10,000+ and closed 5,000 open reqs. Industry-wide, over 260,000 tech workers were laid off in 2023 alone. Sundar Pichai said it plainly: "We hired for a different economic reality than the one we face today." Zuckerberg admitted he "underestimated the indirect costs of lower priority projects." A lot of those decisions could have been "we can make do with X software in the meantime and bring on a small team to support it" or "let's staff this with contractors for the immediate need and slowly convert to full-time as we see what levels out." The cost of a bad permanent hire is 6-9 months of productivity. The cost of a 3-month contract that doesn't convert is just the contract.

- You can pay market rate plus benefits — don't try to compete on equity unless you're pre-IPO with a credible path.

- Process is slower and non-negotiable: compliance, multiple stakeholders, security clearances, background checks. Optimize within the constraints instead of fighting them.

- You're hiring for institutional fit, not growth potential. The question isn't "can this person grow into the role?" — it's "can this person navigate the org matrix and deliver within it?"

- Sourcing: referrals and specialized agencies, not cold-apply LinkedIn. The best enterprise engineers aren't applying to job posts. They're being recruited through networks.

The mistake I see most often — across the board — is companies continuing to run poor hiring practices without ever auditing whether they work, and not planning well when deciding between employees, contractors, and the other levers available. Every company has access to the same tools: full-time hires, contract-to-hire, fractional leaders, agencies, offshore teams. The ones that hire well aren't the ones with the biggest budget — they're the ones who've thought through which lever to pull for which situation and actually committed to a plan.

The best interview is working together

If I could pick only one hiring approach, it would be contract-to-hire.

No interview — not even a great one — tells you what it's like to actually work with someone. A two-to-four-week paid contract engagement does. You get to see how they communicate in Slack, how they handle ambiguity in real tickets, how they respond to feedback on a real PR, and whether they ask the right questions before building the wrong thing. They get to see whether your codebase is what you described, whether the team dynamic is healthy, and whether the work is interesting enough to commit to.

Both sides are auditioning. Both sides should be.

The logistics matter here. If you're offering benefits, meaning health insurance, a 401(k), the full package, then a W-2 contract-to-hire with a defined conversion timeline (30-60 days) makes sense. The candidate gets the safety net, you get the trial period, and conversion is a formality if things are working.

If you're a startup and you're NOT offering benefits, which is common and honest, then keep them on a 1099 contract for the trial period. It can work in the candidate's favor: they can deduct business expenses, they maintain flexibility, and they're not locked into a W-2 arrangement that comes without the benefits that usually justify it. They do take on self-employment tax as a contractor, so set the rate with that in mind. Be transparent about this. "We're doing a 4-week paid contract so we can both decide if this is a fit. If we convert, here's the comp package including benefits. If we don't, you've been paid fairly for your time and nobody wasted six months." That's a conversation adults appreciate.

The trial period should be long enough to see real work but short enough to respect their time. Two to four weeks is the sweet spot. Don't stretch it to three months — that's not a trial, that's a temp gig with commitment issues.

And keep the interview process short if you're doing contract-to-hire. One conversation to confirm they're not wildly misaligned, then start the contract. The work IS the interview. Don't make them do a 4-round interview AND a trial — that's disrespectful of their time and signals that you don't trust your own evaluation process.

If you don't want to manage the trial-period logistics yourself, there are agencies that specialize in exactly this — vetting engineers and placing them on a try-before-you-buy basis. G2i is one example; there are others. You'll pay a premium for the sourcing and vetting, but if it gets you a proven engineer without burning three months on a bad hire, the math works out. The agency handles the contract structure, the candidate gets a real engagement, and you get signal that no amount of whiteboard interviews can replicate.

The interview goes both ways

One thing that gets lost in every "how to hire" article, including this one: the candidate is also interviewing you.

A good engineer — especially one with options — is watching your process for red flags. A six-round interview gauntlet tells them your org can't make decisions. A take-home project with no compensation tells them you don't value their time. A "culture fit" round where someone asks "would you grab a beer with this person?" tells them your culture is a vibe check, not a value system.

And if they DO join and discover that "culture" means enforced factory-floor hours, mandatory fun, and pizza parties presented as a benefit — they'll be gone in 90 days, and they'll tell every engineer in their network why.

But the flip side is real too. Some of the best teams I've been part of had nothing to do with perks. It was a few people who genuinely cared about the problem, worked hard when it mattered, and knew when to call it. We'd finish a brutal sprint, someone would say "let's actually decompress," and we'd end up sketching the next idea on the back of a napkin between rounds of pool. Nobody mandated it. It just happened because the work was good and the people liked each other. I've been on plenty of projects that were hell at times — tight deadlines, shifting requirements, the kind of weeks where you're dreaming in code. But the ones that worked long-term were the ones where leadership made sure the downtime happened too. The intensity was real, but so was the recovery. That balance is what keeps good people around.

The trial period protects them too. A contract-to-hire engagement lets the candidate see the real codebase, the real team dynamics, and the real expectations before they commit. If your environment is genuinely good, this works in your favor — they'll convert because they've seen the proof. If your environment is trash, no amount of employer branding or foosball tables was going to retain them anyway. Better to find out in week two than in month six.

The best engineers aren't just looking for a job. They're looking for a place where they can do work that matters, with people who are competent, under conditions that don't burn them out. If you can offer that, the hire closes itself. If you can't, no interview process in the world saves you.

What not to do

I've made most of these mistakes. Listing them so you can make different ones.

Slow decision-making. An A-tier candidate waits 10 days for your offer and accepts somewhere else. The opportunity cost dwarfs whatever salary negotiation you were agonizing over.

Hiring for seniority, not fit. The "VP of Engineering from Big Tech" who can't ship at startup velocity. They're used to managing managers, not writing code. Different game. Make sure you're hiring for the game you're actually playing.

Filtering out the rough diamonds. Auto-rejecting on communication struggles means you're filtering for polish, not ability. Some of the best engineers I've worked with were rough in interviews and exceptional on the job. Give people a chance to show their work before you judge their presentation.

Hiring in your own image. Only hiring people who think like you makes the team brittle. No diversity of thought means no one catches your blind spots. The team becomes an echo chamber that ships your biases into production.

Ignoring reference calls. "He was fine on the technical evaluation" — but the ex-manager says "difficult to work with." Reference calls take 15 minutes and save 6 months of regret. Ask: "Would you hire this person again?" and listen to the pause before the answer.

Overweighting credentials. Stanford plus FAANG does not equal great engineer. It equals "had access to elite institutions." Some of the most overrated engineers I've encountered had perfect resumes. Some of the most underrated had no degree at all. Judge the work.

Not selling your story. Top talent wants impact and a learning path. If you can't articulate why your company matters, why this year is the inflection point, and what the engineer will learn in the first six months, you'll lose every competitive offer to the founder who can.

The multiplier effect of one great hire

In my experience, one A-tier engineer reduces your own coding hours by roughly 20%, enables 2-3 mid-level engineers to ship faster, and sets a quality bar that lifts the entire team. One B-tier misfire costs 6-9 months of productivity — the churn, the backfill, the trust repair, the technical debt they leave behind.

Hiring isn't an "HR process." It's your core job as a CTO, and if you're a founder without a CTO, it's your most important architectural decision. It also demands commitment. I've seen the same failure pattern at multiple companies, and lived it more than once: hiring authority gets granted, then quietly walked back mid-process. Candidates are in the pipeline, then you're told to pause. A week later, you're told to start again. The chaos of on-again-off-again hiring is worse than not hiring at all, because you burn candidate trust and your own team's morale every cycle. If you're going to hire, commit to it. If you can't commit, don't start.

But more broadly — hiring full-time employees is only one lever, and a good CTO should be advocating for all of them. Corporate partnerships, consultants, contractors, fractional leaders, agencies — these aren't fallback options. They're tools, and knowing which one to reach for in which situation is a leadership decision, not an HR decision. The CTO's job isn't just "hire engineers." It's working with the other executives to figure out the right mix of talent for where the company actually is — not where the org chart says it should be.

And the number one thing this comes down to: set specific budgets, break them out, and stick to them. If you're in the leadership seat, you have to figure out what your runway is and look far enough ahead to make decisions that actually make sense. If you can't do that, you aren't leading anything; you're all in the same chaotic boat, seeing where you drift. Small changes can hit startups and small businesses hard, and every pivot or product switch chasing PMF can be a massive budget hit. But that doesn't rule out some level of planning: some percentage allocated to headcount, some guardrails on what gets spent where. With those in place, you can make realistic decisions about personnel instead of creating a revolving door and burning money on hires you can't retain.
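To make the runway guardrail concrete, here is a minimal sketch of the arithmetic. The function names, the dollar figures, and the idea of expressing the guardrail as a minimum runway in months are all assumptions for illustration, not a prescribed model; plug in your own numbers.

```python
def runway_months(cash: float, monthly_burn: float) -> float:
    """Months of runway left at the current burn rate."""
    if monthly_burn <= 0:
        raise ValueError("monthly_burn must be positive")
    return cash / monthly_burn


def affordable_hires(
    cash: float,
    monthly_burn: float,
    monthly_cost_per_hire: float,
    min_runway_months: float,
) -> int:
    """Largest number of new hires that still leaves min_runway_months of runway.

    Derivation: cash / (burn + n * cost) >= min_runway
                => n <= (cash / min_runway - burn) / cost
    """
    if monthly_cost_per_hire <= 0 or min_runway_months <= 0:
        raise ValueError("cost per hire and minimum runway must be positive")
    max_burn = cash / min_runway_months   # highest burn the guardrail allows
    headroom = max_burn - monthly_burn    # monthly spend available for new hires
    if headroom <= 0:
        return 0                          # already below the runway floor
    return int(headroom // monthly_cost_per_hire)


# Hypothetical numbers: $2.4M in the bank, $100k/month burn,
# $15k/month fully loaded cost per engineer, 18-month runway floor.
current_runway = runway_months(2_400_000, 100_000)                # 24.0 months
max_new_hires = affordable_hires(2_400_000, 100_000, 15_000, 18)  # 2 hires
```

The point of the sketch is the order of operations: fix the runway floor first, then see how many hires fit under it, rather than opening roles and discovering the limit mid-pipeline.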

Your first five engineers set the culture permanently. Every hire after that either reinforces or erodes what you built.

You won't hire perfectly. I haven't. But I've learned to iterate faster: tighten the rubric after every round, debrief honestly about who you missed and who you misjudged, and build the feedback loop that turns hiring from a guess into a system.
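One way to sketch that feedback loop: record each hire's rubric scores at interview time, record later whether the hire worked out, and compare the two. Everything below is hypothetical, including the criterion names, the 1-5 scale, and the made-up records; four data points prove nothing and are only there to show the mechanics.

```python
from collections import defaultdict
from statistics import mean

# Made-up records for illustration: rubric scores (1-5) from the interview,
# plus whether the hire worked out when reviewed later.
hires = [
    {"scores": {"system_design": 4, "debugging": 5, "communication": 2}, "worked_out": True},
    {"scores": {"system_design": 5, "debugging": 4, "communication": 3}, "worked_out": True},
    {"scores": {"system_design": 2, "debugging": 2, "communication": 5}, "worked_out": False},
    {"scores": {"system_design": 3, "debugging": 1, "communication": 4}, "worked_out": False},
]


def criterion_gaps(records):
    """Per criterion: mean score of hires who worked out minus mean score of those who didn't.

    A large positive gap suggests the criterion tracked success in this sample;
    a gap near zero or below suggests it deserves a second look in the rubric.
    """
    by_outcome = defaultdict(lambda: {True: [], False: []})
    for record in records:
        for criterion, score in record["scores"].items():
            by_outcome[criterion][record["worked_out"]].append(score)

    gaps = {}
    for criterion, groups in by_outcome.items():
        # Skip criteria that lack data for one of the two outcomes.
        if groups[True] and groups[False]:
            gaps[criterion] = mean(groups[True]) - mean(groups[False])
    return gaps


gaps = criterion_gaps(hires)
for criterion, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    print(f"{criterion}: {gap:+.1f}")
```

Rerun something like this after every hiring round. It only sees people you actually hired, so it won't tell you who you missed; that part still needs the honest debrief.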

Your interview process is architecture. Treat it like code — design it, test it, iterate on it, and throw away the parts that don't work. The investment in getting this right 10x's your hit rate and compounds every quarter.

Frequently asked questions

How long should the engineering interview process take?

Two to three weeks, max. Every day past that, you're losing candidates to companies that move faster. If you need two weeks of internal deliberation after the final round, you either don't have a clear rubric or you have too many decision-makers. Speed is a signal — a fast, decisive process tells the candidate this company knows what it wants.

Should I pay engineers for take-home projects?

Yes, or at minimum offer a stipend. Otherwise you're asking someone to put in 2-4 hours of unpaid labor to prove they're worth talking to. Compensating them signals respect and widens your candidate pool to include people who can't afford to do free work for five companies simultaneously. The take-home project is one of the best interview tools you have, but only if you treat the candidate's time like it has value.

How do I hire engineers without a recruiter?

Referrals are your strongest channel — the best enterprise engineers aren't applying to job posts, they're being recruited through networks. Beyond that, contract-to-hire lets you evaluate engineers through real work instead of interviews. If you want help with sourcing and vetting, agencies like G2i specialize in try-before-you-buy placements. And don't overlook the diamonds in the rough — bootcamp grads, career changers, and self-taught engineers that traditional filters discard.

What should I look for in a junior engineer?

Curiosity, shipping discipline, and learning velocity, not years of experience. The short-sighted approach is to only hire seniors because juniors "cost too much to train." The long-term approach is to realize that every senior you want to hire in three years is a junior someone invested in today. Look for the ones who light up about the interesting problem in the middle of their own projects.

How do I evaluate engineers when AI can write code?

System design and debugging are the two interview stages that are hardest to fake and most predictive in the AI age. AI can produce syntactically correct code, but it can't architect — it can't make trade-offs between consistency and availability, and it doesn't check whether something already exists before rolling its own solution. The engineer who knows when to use the tool that already exists, when to let AI generate the boilerplate, and when to step in and make the decision AI can't — that's who you're hiring for now.

Is contract-to-hire better than direct hire?

For most cases, yes. No interview tells you what it's like to actually work with someone — a two-to-four-week paid contract does. Both sides are auditioning, and both sides should be. Keep the interview process short if you're doing contract-to-hire — one conversation to confirm alignment, then start the contract. The work IS the interview.

I've hired engineers across startups, consultancies, and Fortune 500 engagements for over 15 years. If you're building a team and want a second opinion on your hiring process, your interview structure, or a specific candidate decision, book 30 minutes. No pitch — just pattern-matching from someone who's made these mistakes already.
